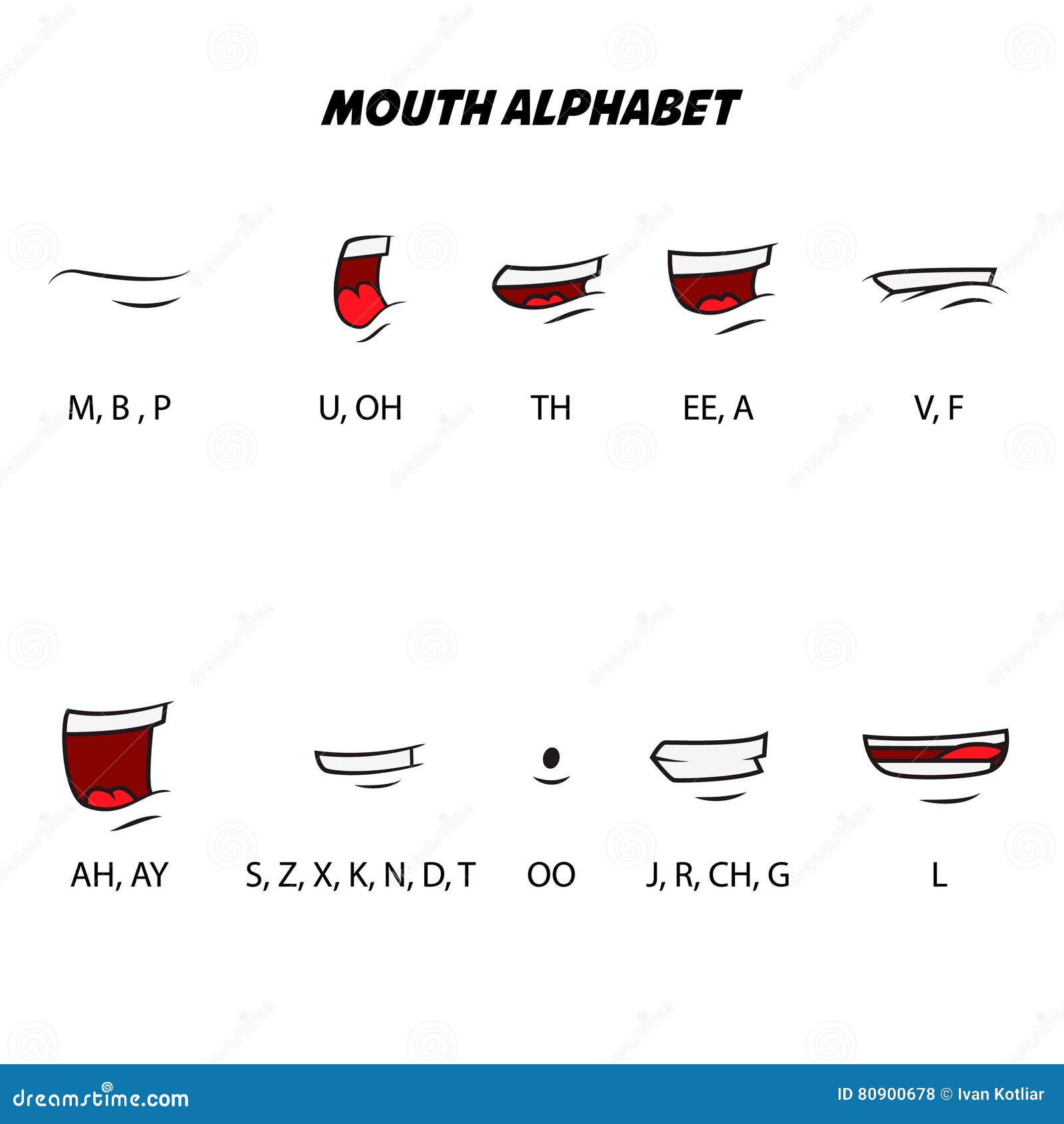

You can use Adobe Sensei AI technology in Character Animator to assign mouth shapes, or visemes, to mouth sounds, or phonemes. Except for the neutral, smiling, and surprised expressions, which come from the camera, everything that drives mouth shapes in Character Animator comes from the audio. You can tweak how long mouth shapes last and how exaggerated they are. This goes a long way toward showing what a character is thinking or feeling. Mouth shapes are Adobe Photoshop and Illustrator documents, so you can borrow a mouth from one character and use it on another, or make new mouth sets entirely.

This is a simple walkthrough of creating a basic character in Adobe Character Animator CC. After going through this tutorial, you should be able to create an animated character with head tracking, responsive eyes and eyebrows, realtime lip sync, and a body with draggable limbs.

You can control an animated character in Character Animator, known as a puppet, with your own movements via a camera. Alternatively, you can upload prerecorded audio and sync it with an existing puppet, while adding gestures and other movements manually. In either case, you can customize and alter specific mouth shapes in Character Animator or manually assign certain mouth shapes to the puppet at particular times in the recording. There are a few ways to fix those, depending on whether you want the extra mouth animations or not.

I'd be curious to see the puppet's mouth rigging. Visemes are mutually exclusive, so it seems more likely that one of them is a Cycle Layers animation (like what Alan asked about above) that finishes and runs out of frames instead of being set to hold on the last layer, or that a mistagged item is causing one of the mouth shapes to be missing. If you look at the Properties panel for the Lip Sync behavior on the puppet, there's a section that lists which layers matched for each viseme.

The puppet itself does not have any behaviors attached to it. Could you explain what you mean by group versus single layers? Maybe that will provide clues as to what is wrong.

Sure: just the folder (with layers inside) versus the layers themselves (like the other visemes, D, Ee, F, L, etc., which each have one layer).

Your Aa, Uhh, and W-Oo mouths are folders with layers inside them (2, 2, and 3, respectively). Right now, when the mouth behavior triggers those groups, their layers are all hidden (no eyeball on those rows). On the other hand, if you select those groups in Blank face, there should be a Cycle Layers behavior attached. When it is attached to the group for a mouth and is triggered, it starts showing the layers in sequence. This can be useful so that the Aa, Uhh, and W-Oo mouths get a little bigger when those visemes are held a bit longer. Without that behavior, though, nothing gets displayed.
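To make the "running out of frames" versus "hold on last layer" distinction concrete, here is a minimal Python sketch of how a layer-cycling mouth group might behave. It is only an illustrative model, not Character Animator's implementation or API: the function name `visible_layer`, its parameters, and the assumption that the cycle advances one layer per frame are all hypothetical.

```python
from typing import Optional


def visible_layer(num_layers: int, frames_since_trigger: int,
                  hold_on_last: bool) -> Optional[int]:
    """Return the index of the layer shown, or None if nothing is shown.

    Hypothetical model: the cycle advances one layer per frame after the
    viseme group is triggered (an assumption, not a documented rate).
    """
    if num_layers <= 0 or frames_since_trigger < 0:
        return None
    if frames_since_trigger < num_layers:
        # Still inside the sequence: show the layer for this frame.
        return frames_since_trigger
    # Sequence finished: either keep the last layer or show nothing.
    return num_layers - 1 if hold_on_last else None


# A three-layer mouth (like W-Oo above) held for five frames.
print([visible_layer(3, f, hold_on_last=True) for f in range(5)])
# [0, 1, 2, 2, 2]
print([visible_layer(3, f, hold_on_last=False) for f in range(5)])
# [0, 1, 2, None, None]
```

In this model, a viseme held longer than the number of layers shows a blank mouth unless the cycle holds on its last layer, which matches the symptom discussed in the thread.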
The lip sync engine actually operates at 80 Hz, so its outputs are not necessarily aligned to frame boundaries. For some frame rates, it's even possible for it to generate a viseme in an interframe gap that is never displayed. The lip sync behavior just takes the value that's active at the middle of the frame. We've considered whether we might get better results in some cases by informing it of the target rate, but haven't done those experiments yet.

All that is to say that I don't think the misaligned visemes are the key part.
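The sampling described above can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not the actual engine: it assumes each 80 Hz output stays active until the next one, that the frame rate is an integer, and the helper name `displayed_visemes` is hypothetical.

```python
from fractions import Fraction

ENGINE_RATE_HZ = 80  # lip sync engine rate mentioned in the text


def displayed_visemes(visemes, fps):
    """Return the viseme shown on each frame.

    `visemes` holds one label per engine tick (1/80 s each, assumed to stay
    active until the next tick). Each frame shows whatever is active at the
    middle of that frame. `fps` must be an integer.
    """
    duration = Fraction(len(visemes), ENGINE_RATE_HZ)
    shown = []
    frame = 0
    while True:
        mid = Fraction(2 * frame + 1, 2 * fps)  # time at the frame's midpoint
        if mid >= duration:
            break
        tick = int(mid * ENGINE_RATE_HZ)  # engine tick active at that time
        shown.append(visemes[tick])
        frame += 1
    return shown


# "M" occupies ticks 2-4, which fall between the midpoints of frames 0 and 1
# at 24 fps, so it is never displayed.
ticks = ["Aa"] * 2 + ["M"] * 3 + ["Ee"] * 5
print(displayed_visemes(ticks, 24))
# ['Aa', 'Ee', 'Ee']
```

Exact fractions are used instead of floats so that a midpoint landing exactly on a tick boundary is not misassigned by rounding error.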
